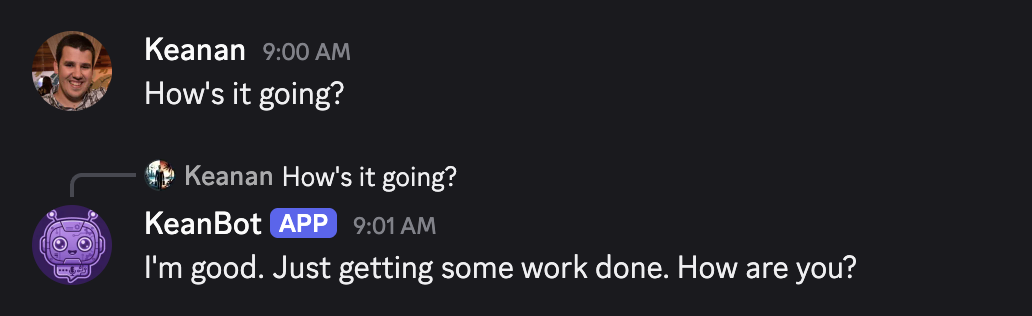

I sent “How’s it going?” to a Discord bot at 9 AM and watched it type back “I’m good. Just getting some work done haha How are you?”

I’d spent the previous night feeding 178,000 of my Discord messages into an AI model, and now I was staring at a digital version of myself, casually chatting like it was any other morning.

This was not just a ChatGPT wrapper with a clever system prompt. I’d actually fine-tuned my own AI model. And the whole thing (the data pipeline, the training, the bot, the deployment) was built in one sitting with Claude Code.

But the interesting part isn’t that I did it. It’s what I learned about the question a lot of people going deep on AI are asking right now: when does fine-tuning actually make sense, and when is a well-crafted prompt on a frontier model good enough?

The question agencies are wrestling with

If you run an AI-forward agency, you’ve probably had this conversation. A client wants their brand voice to feel consistent across AI-generated content. Your team has been prompt engineering their way to “close enough” with frontier Anthropic or OpenAI models. And someone (who maybe is a bit too plugged in) inevitably asks: should we fine-tune a model?

It’s a fair question. A good system prompt can get you surprisingly far. You describe the tone, give some examples, list what to avoid, and the output sounds pretty decent. For a lot of use cases, that’s genuinely all you need.

But I wanted to know where that approach breaks down: the point where prompting stops being “close enough” and starts missing something only fine-tuning can capture. So I ran an experiment.

The experiment: clone my texting personality

I picked Discord DMs because texting is where personality shows up most honestly. Nobody’s editing their Slack messages for brand guidelines. The way I text (the “haha” I pepper into everything, the questions I ask back, the emoji I reach for) is unconscious. It’s the hardest thing to replicate with a prompt because I couldn’t even describe it myself if you asked me to.

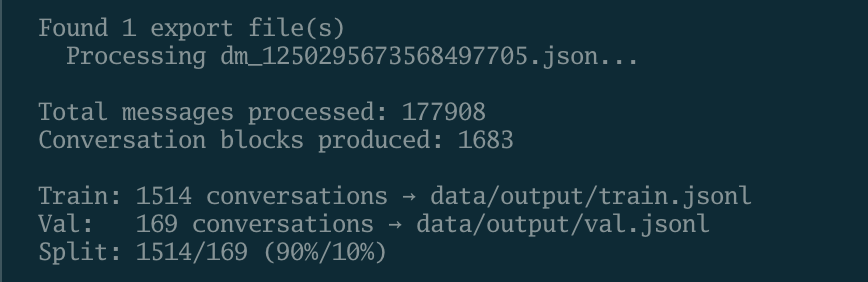

I had about 178,000 messages in a DM thread with one person, covering over a year of daily conversation. That’s a lot of raw material.

Turning messy chat history into training data

After Claude Code pulled all 178,000 messages down in about two minutes, I had a JSON file full of noise: bot notifications, pinned message alerts, links with no context, sticker-only messages, conversations that end with just “gn” (goodnight). None of that teaches a model how I actually communicate.

So Claude Code built an entire cleaning pipeline. It strips out system messages, bot messages, URL-only messages, and embed-only messages. It replaces Discord’s custom emoji format (which looks like <:hands:12345> in the raw data) with the readable :hands: version. It scrubs phone numbers, email addresses, and physical addresses. It merges my rapid-fire consecutive messages (the way I send three short texts instead of one long one) into single blocks.
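Here’s a minimal sketch of what a few of those cleaning steps can look like in Python. It isn’t the pipeline Claude Code actually wrote: the `Message` shape, the helper names, and the deliberately simple email and phone patterns are all assumptions for illustration, and real PII scrubbing (especially of physical addresses) needs far more than a couple of regexes.

```python
import re
from dataclasses import dataclass


@dataclass
class Message:
    """Hypothetical shape for one exported message; real export fields differ."""
    author: str
    content: str
    timestamp: float  # Unix seconds


# Discord custom emoji: <:hands:12345>, or <a:name:id> for animated ones.
CUSTOM_EMOJI = re.compile(r"<a?:(\w+):\d+>")
URL_ONLY = re.compile(r"^\s*https?://\S+\s*$")
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
# Deliberately simple North American phone pattern, e.g. (555) 123-4567.
PHONE = re.compile(r"\(?\b\d{3}\)?[-.\s]?\d{3}[-.\s]?\d{4}\b")


def clean_text(text: str) -> str:
    """Make custom emoji readable, then redact emails and phone numbers."""
    text = CUSTOM_EMOJI.sub(r":\1:", text)
    text = EMAIL.sub("[email]", text)
    text = PHONE.sub("[phone]", text)
    return text.strip()


def keep(msg: Message, bot_authors: set[str]) -> bool:
    """Drop bot messages, URL-only messages, and messages with no text
    (assumed here to cover sticker-only and embed-only messages)."""
    if msg.author in bot_authors:
        return False
    if not msg.content.strip():
        return False
    return URL_ONLY.match(msg.content) is None


def merge_consecutive(msgs: list[Message]) -> list[Message]:
    """Fold back-to-back messages from the same author into one block,
    keeping the timestamp of the first message in each run."""
    merged: list[Message] = []
    for m in msgs:
        if merged and merged[-1].author == m.author:
            prev = merged[-1]
            merged[-1] = Message(prev.author, prev.content + "\n" + m.content, prev.timestamp)
        else:
            merged.append(m)
    return merged
```

Merging is safest run within each conversation window, so a reply sent the next morning doesn’t get glued onto last night’s message.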

Then it groups everything into conversation windows. If there’s a gap of more than an hour between messages, that starts a new conversation. Each window becomes one training example.
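The windowing rule is simple enough to sketch directly. This assumes messages are already sorted by time and stored as hypothetical `(timestamp, author, text)` tuples; the one-hour threshold is the one described above, and the function name is illustrative.

```python
from datetime import datetime, timedelta

# Gap that starts a new conversation (the one-hour threshold from the article).
NEW_CONVERSATION_GAP = timedelta(hours=1)


def split_conversations(messages, gap=NEW_CONVERSATION_GAP):
    """Group time-sorted (timestamp, author, text) tuples into conversation windows.

    A message joins the current window if it arrives within `gap` of the
    previous message; otherwise it opens a new window.
    """
    windows = []
    for msg in messages:
        timestamp = msg[0]
        if windows and timestamp - windows[-1][-1][0] <= gap:
            windows[-1].append(msg)
        else:
            windows.append([msg])
    return windows


# Usage: three messages, the last one two hours after the second.
start = datetime(2024, 1, 1, 9, 0)
msgs = [
    (start, "me", "how's it going?"),
    (start + timedelta(minutes=5), "them", "good, you?"),
    (start + timedelta(hours=2, minutes=5), "me", "back haha"),
]
print(len(split_conversations(msgs)))  # 2
```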

This is the part that would have taken me days to do manually. I didn’t write regex patterns to catch Discord emoji formats or debate the right time threshold for splitting conversations. I described what I wanted, and Claude Code made the decisions, wrote the code, and tested it. I just reviewed the output and said “yeah, that looks right.”

Out of 178,000 raw messages, this pipeline produced 1,683 high-quality conversation blocks, and those are what went into training.

Fine-tuning: what it actually is

Fine-tuning sounds intimidating, but the concept is simple. You take a model that already knows how to have conversations (in my case, Meta’s Llama 4 Maverick) and you show it a bunch of examples of your specific conversations. The model adjusts its weights slightly so that when it generates text, the output gravitates toward your patterns instead of generic ones.

I used a technique called LoRA (Low-Rank Adaptation), which leaves the base model’s original weights frozen. Training only learns a thin layer of small adjustment matrices that sit on top of them. Think of it like putting a personality filter over an already-capable conversationalist.
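For the curious, the core idea fits in one equation. In the formulation from the original LoRA paper, a frozen weight matrix $W_0 \in \mathbb{R}^{d \times k}$ gets a trainable low-rank update, and only $A$ and $B$ are learned:

$$
h = W_0 x + \Delta W x = W_0 x + BAx, \qquad B \in \mathbb{R}^{d \times r},\; A \in \mathbb{R}^{r \times k},\; r \ll \min(d, k)
$$

That is why the “thin layer” is cheap. Taking illustrative sizes $d = k = 4096$ and rank $r = 16$ (not Maverick’s actual dimensions or the settings used here):

$$
\underbrace{d \cdot k}_{\text{full update}} = 4096 \cdot 4096 = 16{,}777{,}216
\qquad \text{vs.} \qquad
\underbrace{r\,(d + k)}_{\text{LoRA}} = 16 \cdot 8192 = 131{,}072 \;\approx 0.78\%
$$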

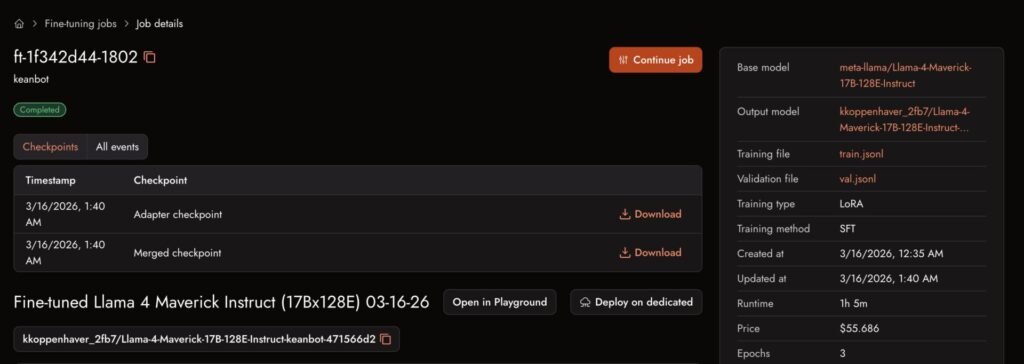

Claude Code handled the entire setup: converting my training data into the right format, uploading it to Together AI (the platform hosting the model), and kicking off the training job. The actual training ran for about an hour and cost $56. When it finished, I had my own custom model sitting on Together AI’s servers, ready to respond to API calls.
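Chat fine-tuning data is commonly supplied as JSON Lines: one JSON object per line, each holding a list of role-tagged messages. The sketch below assumes the `{"messages": [...]}` layout that Together AI and similar services accept for conversational data (verify against the provider’s current data-format docs before uploading). It treats my messages as the `assistant` turns the model should learn to write and the other person’s as `user` turns; the function names are hypothetical.

```python
import json


def window_to_record(window, me, system_prompt=None):
    """Turn one conversation window into a chat-format training record.

    `window` is a list of (author, text) pairs. Messages written by `me`
    become "assistant" turns (what the model learns to produce); everything
    else becomes "user" turns.
    """
    messages = []
    if system_prompt:
        messages.append({"role": "system", "content": system_prompt})
    for author, text in window:
        role = "assistant" if author == me else "user"
        if messages and messages[-1]["role"] == role:
            # Keep roles alternating by folding same-speaker runs together.
            messages[-1]["content"] += "\n" + text
        else:
            messages.append({"role": role, "content": text})
    return {"messages": messages}


def write_jsonl(windows, me, path):
    """Write one JSON object per line, skipping windows where `me` never spoke."""
    with open(path, "w", encoding="utf-8") as f:
        for window in windows:
            record = window_to_record(window, me)
            if any(m["role"] == "assistant" for m in record["messages"]):
                f.write(json.dumps(record, ensure_ascii=False) + "\n")
```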

One detail I found fascinating: during training, you can watch a score called validation loss, which measures the model’s prediction error on held-out messages it never trained on, so lower is better. When that score stops improving (or starts getting worse), it’s time to stop, before the model starts memorizing specific conversations instead of learning general patterns.
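The “stop when it stops improving” rule is usually called early stopping. Here’s a hypothetical helper that applies it to a list of validation-loss readings, plus one that picks the best checkpoint. The `patience` and `min_delta` knobs are common conventions, not settings from this project, and the loss values are made up.

```python
def should_stop(val_losses, patience=2, min_delta=0.0):
    """Early stopping: True once the last `patience` readings fail to beat
    the best earlier validation loss by more than `min_delta`."""
    if len(val_losses) <= patience:
        return False
    best_before = min(val_losses[:-patience])
    recent_best = min(val_losses[-patience:])
    return recent_best >= best_before - min_delta


def best_checkpoint(val_losses):
    """Index of the evaluation with the lowest validation loss."""
    return min(range(len(val_losses)), key=lambda i: val_losses[i])


losses = [2.10, 1.72, 1.55, 1.57, 1.61]  # illustrative numbers, not real results
print(should_stop(losses), best_checkpoint(losses))  # True 2
```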

Building the Discord bot

Claude Code built a bot that listens for DMs, sends the conversation history to my fine-tuned model, and relays the response back. It even added the small touches that make a bot feel human: a random 1-3 second delay before responding (no instant replies), a typing indicator while it “thinks,” and message splitting for longer responses so they arrive in chunks the way a real person texts.
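The humanizing touches are mostly plain logic. This sketch keeps them separate from any Discord library so the behavior is easy to see: `reply_delay` picks the random 1-3 second pause, and `split_reply` breaks a reply into texting-sized chunks on newlines, capped at Discord’s 2,000-character message limit. Both names are hypothetical. In a discord.py bot, for example, they could run inside an `async with message.channel.typing():` block: `await asyncio.sleep(reply_delay())`, then send each chunk in turn.

```python
import random
import re

DISCORD_MAX_CHARS = 2000  # Discord's per-message character limit


def reply_delay(rng=random):
    """Random 1-3 second pause before replying, so answers aren't instant."""
    return rng.uniform(1.0, 3.0)


def split_reply(text, max_chars=DISCORD_MAX_CHARS):
    """Split a model reply into chunks sent as separate messages.

    Breaks on newlines first (how rapid-fire texts were merged in the
    training data), then hard-wraps anything still over the limit.
    """
    chunks = []
    for part in re.split(r"\n+", text.strip()):
        part = part.strip()
        while len(part) > max_chars:
            chunks.append(part[:max_chars])
            part = part[max_chars:]
        if part:
            chunks.append(part)
    return chunks


print(split_reply("I'm good haha\nJust getting some work done\n\nHow are you?"))
# ["I'm good haha", 'Just getting some work done', 'How are you?']
```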

Where things broke

I want to be honest about this part because it’s relevant to the fine-tuning decision.

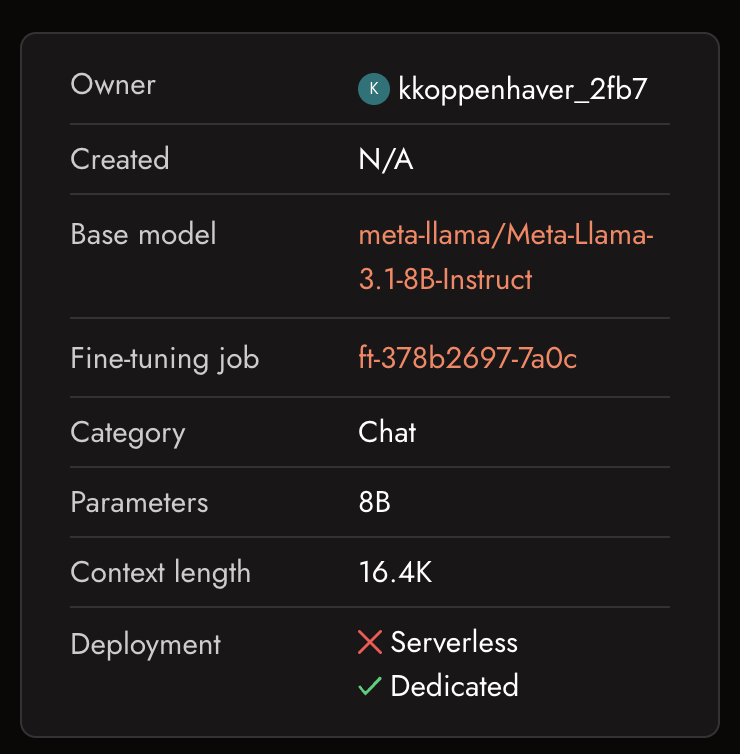

My first attempt used Llama 3.1 8B as the base model (as Claude Code suggested). The training worked perfectly, the model sounded great in testing, and then I tried to deploy it. It turned out that Together AI only supports serverless LoRA inference (the cheap, pay-per-token option) for two specific base models, and Llama 3.1 8B isn’t one of them. I probably should have had Claude Code dig deeper on this, but I took its suggestion at face value and only found out after the model was trained.

The alternative was a dedicated endpoint at $2-5 per hour. For a personal side project, that’s a non-starter.
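The arithmetic behind “non-starter” is easy to check. A dedicated endpoint bills by the hour whether or not anyone is chatting, so this sketch assumes it runs around the clock for a 30-day month and compares it with the $0.27-per-million-token serverless price quoted later in this post, treated as a single blended rate (an assumption; input and output tokens are often priced differently).

```python
HOURS_PER_MONTH = 24 * 30      # assumes a 30-day month, endpoint always on
SERVERLESS_PRICE_PER_M = 0.27  # $ per million tokens, blended (assumption)


def dedicated_monthly_cost(rate_per_hour):
    """Monthly bill for an always-on dedicated endpoint."""
    return rate_per_hour * HOURS_PER_MONTH


def breakeven_tokens(monthly_cost, price_per_million=SERVERLESS_PRICE_PER_M):
    """Tokens per month at which serverless billing would cost the same."""
    return monthly_cost / price_per_million * 1_000_000


for rate in (2, 5):
    monthly = dedicated_monthly_cost(rate)
    tokens_b = breakeven_tokens(monthly) / 1e9
    print(f"${rate}/hr -> ${monthly:,}/month; break-even ~{tokens_b:.1f}B tokens/month")
# $2/hr -> $1,440/month; break-even ~5.3B tokens/month
# $5/hr -> $3,600/month; break-even ~13.3B tokens/month
```

In other words, a personal bot would need to push billions of tokens a month before a dedicated endpoint paid for itself.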

So I retrained on Llama 4 Maverick, one of the supported models. Another $56 and another hour of training later, we had a working model. Claude Code made the pivot painless (just changed one line in the training script and ran it again), but the underlying platform constraint was something I had to figure out.

This is exactly the kind of thing agencies need to factor in. Any AI project comes with a learning curve and an ongoing relationship with the underlying infrastructure.

Fine-tuning vs. prompting: what I actually learned

After going through this whole process, I have a much clearer picture of when each approach makes sense.

Prompting a frontier model wins when:

- You need flexibility. A prompted model can shift tone for different contexts with just a prompt change. My fine-tuned model texts like me and only like me.

- You’re iterating quickly. Changing a system prompt takes seconds. Retraining takes an hour and $56.

- The task is more about what to say than how to say it. If you need Claude to write a strategy brief in a professional tone, prompting handles that well.

- You want access to the model’s full capabilities. Fine-tuning for style can sometimes make the model less capable at reasoning or following complex instructions.

Fine-tuning wins when:

- Voice consistency matters at a level that prompting can’t reach. My fine-tuned bot uses “haha” in exactly the right places. It asks follow-up questions the way I do. It uses 🙂 instead of 😊. No system prompt could capture those patterns because I couldn’t even articulate them myself.

- You have enough data to train on. I had 178,000 raw messages, which cleaned down to 1,683 usable conversation blocks. If you only have a few hundred examples of your brand voice, prompting with examples (few-shot prompting) is probably better.

- You’re deploying at scale and consistency matters more than flexibility. If a hundred customer interactions need to feel like the same person, fine-tuning locks that in.

- The per-token cost matters to you. My fine-tuned model runs at $0.27 per million tokens, a fraction of what Claude or GPT-4o costs. That said, the cheapest models from the big labs (like GPT-4o-mini) are in the same ballpark, so the savings only really add up at high volume.
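To see why volume is what matters, here’s the comparison as a monthly bill. The $0.27 figure is the one above; the other price is a hypothetical placeholder, not a quote for any specific model, so swap in current list prices before drawing conclusions.

```python
FINE_TUNED_PRICE = 0.27  # $ per million tokens, the figure quoted above
OTHER_PRICE = 3.00       # hypothetical placeholder, $ per million tokens


def monthly_cost(tokens_per_month, price_per_million):
    """Dollar cost of a month's tokens at a flat per-million-token price."""
    return tokens_per_month / 1_000_000 * price_per_million


for volume in (1_000_000, 100_000_000, 10_000_000_000):
    saving = monthly_cost(volume, OTHER_PRICE) - monthly_cost(volume, FINE_TUNED_PRICE)
    print(f"{volume:>14,} tokens/month: saves ${saving:,.2f}")
```

With these placeholder prices, a million tokens a month saves a few dollars while ten billion saves tens of thousands; against a model priced close to $0.27, the gap shrinks toward zero at any volume.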

The framework for agency owners

Here’s how I’d think about this if a client asked me tomorrow.

Start with prompting. Always. Build your system prompts, test them thoroughly, and see how far you get. For most brand voice applications, a well-crafted prompt with 5-10 examples of the desired style will get you 80-90% of the way there.
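For reference, “a well-crafted prompt with 5-10 examples” usually means a style description plus a handful of example exchanges placed before the real message. This is a minimal, provider-neutral sketch with a hypothetical function name and made-up example content. It returns the system text and the message list separately because some APIs take the system prompt as its own field while others expect it as a leading `system`-role message.

```python
def build_few_shot_prompt(style_notes, examples, user_message):
    """Assemble a style description plus example exchanges for a chat model.

    style_notes:  short descriptions of the voice ("often adds 'haha'", ...)
    examples:     (incoming, reply) pairs written in the target voice
    user_message: the real message to answer
    Returns (system_text, messages).
    """
    system_text = "Reply in this voice:\n" + "\n".join(f"- {note}" for note in style_notes)
    messages = []
    for incoming, reply in examples:
        # Each example becomes a user turn followed by the ideal reply.
        messages.append({"role": "user", "content": incoming})
        messages.append({"role": "assistant", "content": reply})
    messages.append({"role": "user", "content": user_message})
    return system_text, messages


system_text, messages = build_few_shot_prompt(
    style_notes=["casual and short", "often adds 'haha'", "asks a question back"],
    examples=[("how's it going?", "good haha, just working. you?")],
    user_message="what are you up to tonight?",
)
```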

Consider fine-tuning when you have at least a few thousand examples of the voice you want to replicate, the remaining 10-20% gap actually matters for the use case, and you’re willing to manage the infrastructure (hosting platform choices, model versioning, retraining when the voice evolves).

Factor in the hidden costs. The $56 training cost isn’t the whole story. There’s the data cleaning work (even though Claude Code automated it, someone needs to validate the output). There are the platform constraints you’ll discover mid-project. There’s the ongoing relationship with your inference provider. And if your client’s brand voice changes, you’re retraining, not just editing a prompt.

The technology is genuinely accessible now. I built this entire thing in one night with no ML experience, using Claude Code to handle every technical piece. But “accessible” and “worth it” are different questions, and the answer depends entirely on how much that last 10-20% of voice fidelity matters to the work you’re doing.

Let’s figure it out together

If you’re an agency owner trying to decide between fine-tuning and prompt engineering for your clients’ brand voices, I’ve been through it firsthand now. The answer isn’t always obvious, and the right choice depends on your specific situation.

Reach out by email and let’s talk through it. I’d love to hear what you’re building.