I keep seeing the same pattern with agency owners and AI.

Someone scrolls LinkedIn and sees a post about an automated pipeline that generates 50 Instagram ads a day across ten channels. They think, “I need to do that.” So they sign up for four tools, spend a weekend experimenting, hit a wall because nothing works like the demo, and by Monday they’re frustrated. A month later they’re not using any of it and telling everyone the technology “just isn’t there yet.”

This happens constantly. The agency owner who tried everything and burned out is far more common than the one who quietly integrated AI into their workflow and never looked back. That isn’t because they lack motivation or ambition. There’s a knowledge and experience gap that you can’t shortcut by going all in on day one.

I’ve been working with agency owners on this for a couple of years now, and the ones who stick with AI and get real value from it all follow a similar progression. The general progression isn’t proprietary, but I’ve adapted it to reflect how I’ve seen agencies specifically adopt AI tooling successfully.

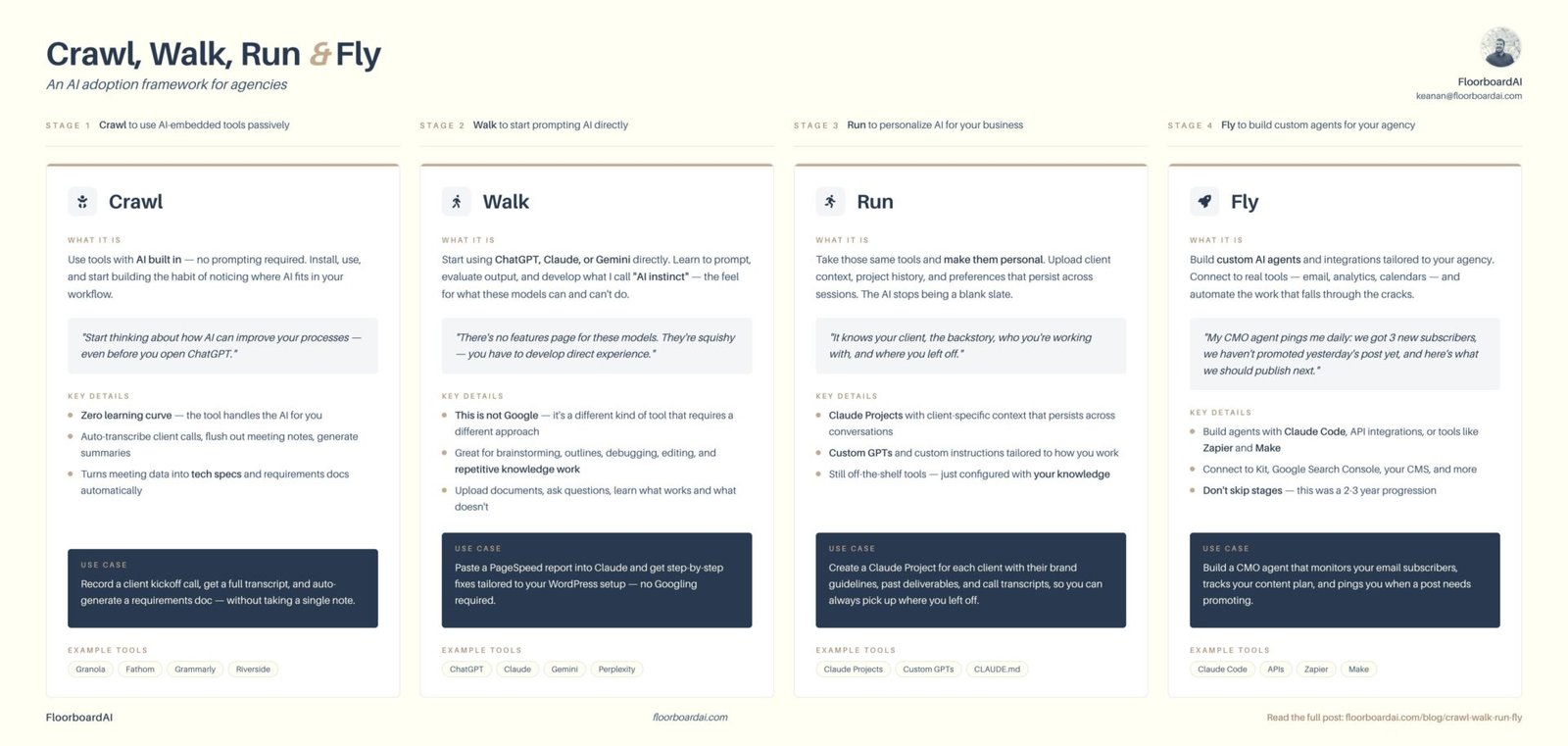

I call it the Crawl, Walk, Run, Fly framework. It walks you through four stages of AI adoption so you can start using this technology in your business.

I put together a visual reference that covers all four stages with example tools, use cases, and key details at each level. If you want the quick version to save or share with your team, you can grab it here.

Download the Crawl, Walk, Run, Fly infographic →

Let’s take a closer look at each of the four phases.

Crawl: use AI-powered tools without prompting

In the Crawl stage, we’re not going off the deep end with advanced prompting techniques or learning how LLMs work under the hood. We’re just installing tools that have AI built in and starting to use them. This gets you many of the benefits of AI without spending a ton of time on configuration first.

My go-to recommendation is for clients to start transcribing all their calls. I use Granola, but Fathom and plenty of others do the same thing. You install it, it runs during your calls, and when the meeting’s over you have a full transcript with notes, action items, and a summary. The tool handles all the AI behind the scenes, and you get a permanent record of the meeting.

The second part is just as important: keeping all this new data organized. Set up per-client folders in Google Drive (or wherever you keep things), name the files with the date of the call so you can actually find them later, and start building a habit of putting that data somewhere structured.

The value of this compounds over time. Six months of organized call transcripts become a searchable knowledge base that you can query with any tool you decide to use down the road. And even without the AI angle, you’re cleaning up your operations. Most agency owners don’t realize how much they’re bouncing between tools without any organized data until they start thinking about it through this lens.

And even though you may not technically be “doing AI” yet, you’re building the data foundation that will help everything else actually work.

Walk: start prompting AI directly

This is where you open ChatGPT or Claude and start having conversations with it. Not the fancy stuff yet. Just getting comfortable asking questions and evaluating what comes back.

Start with the kinds of things you’d normally Google or ask a coworker. “Help me write a follow-up email to a prospect who went quiet.” “Explain what a reverse proxy does in a way I can tell my client.” Use it for deep research on a topic you need background on before a pitch. Ask it to brainstorm blog post ideas for a client’s content calendar. Have it proofread a proposal before you send it.

These are low-stakes, high-frequency tasks where you start to build a feel for how these tools think and where they’re useful. That matters more than it sounds, because it’s the foundation for everything that comes next.

In this stage, it also helps to ask the LLM about things you already know a lot about, so you can actually judge the quality of its answers. That gives you a better sense of whether the way you’re prompting is working or whether you need to change direction.

Once you’re comfortable with that back-and-forth, you can start giving it more to work with. And if you’ve already been through the Crawl stage, you already have something most people don’t: organized data.

You could take a group of client call transcripts, dump them into Claude, and ask something like “what upsell opportunities am I missing?” Or you could put your last five sales calls in and ask for a performance review on how you handled objections. This is dramatically more useful than opening a blank chat window and asking a generic question, because you have real context to give it.

Where people get tripped up

The most common mistake I see at this stage: people don’t define what a “successful” response looks like. If you’re asking it to analyze your sales calls, what kind of feedback are you hoping to get? Missed opportunities? Feature selling? How you responded to objections? A one-sentence prompt won’t give you good results, even with great transcript data.

I coach people to think about it like explaining a problem to a very smart employee who just started. They have all the knowledge in the world, but you need to show them what a good job looks like so they can apply that knowledge to your specific situation.

Developing your “AI instinct”

This is also where you start developing what I call “AI instinct.” Unlike traditional software where you can go to a pricing page and see every feature listed out, these models are squishy. They’re great at things that seem like they should be really hard and terrible at things that seem easy. You can’t learn that from a blog post. You have to use the tools, notice the patterns, and build a feel for what works and what doesn’t.

Run: make AI personal to your business

Once you’re using ChatGPT or Claude regularly, you’ll notice something: you’re always re-explaining the same context. Every prompt starts with “I’m working with a client who…” or “our tech stack is…” or “the project goals are…” You’re typing the same background information over and over.

That repetition is the signal that you’re ready for the “Run” stage.

Claude has Projects, ChatGPT has Custom GPTs, and most other tools have something similar. The idea is the same: you upload information about a specific client or project once, and every chat inside that project can draw on it as background context.

To get started, I tell people to upload the original project brief or SOW, as many call transcripts as they have, and something that captures the current state of the project (a Jira board export, recent email updates, whatever you’ve got). All of this becomes base knowledge that any chat within the project can pull from.

You’ll quickly notice that the quality of the output changes. Instead of generic suggestions, you start getting responses that are specific to your client’s situation, their tech stack, and their actual needs, without having to explain all of that every time. It’s the same off-the-shelf tools you were already using, just configured with your knowledge.

Fly: build custom agents for your agency

This is where you start building AI tools tailored to your specific needs. That means moving from generic, off-the-shelf ChatGPT, Claude, or Gemini to using the underlying models inside custom tools that the AI labs will never build for you.

As an example, I’ve been “hiring” (building) AI agents for jobs that need doing but that I can’t justify a salary for yet.

Every agency has process-driven work that needs to happen (lead gen, content planning, research, etc.) that you can’t justify hiring someone full-time for, but that you also can’t do all yourself. AI agents fit perfectly in that gap.

I built myself a CMO agent that has access to my email subscribers in Kit, my Google Search Console data, and the content plan I’ve put together for my blog. Every day it pings me with a status update: here’s our subscriber count, we still haven’t promoted yesterday’s post, and based on our content plan, this is what we should probably publish next, etc. Before I built this, I’d put together a whole plan for a blog, publish hard for a week, and then completely fall off. The agent keeps me accountable in a way I wasn’t able to do for myself and it’s able to query live data to adjust the plan as we go.

This kind of tool-building keeps certain tasks from getting pushed to the back burner, and it can be a real differentiator, especially for smaller agencies trying to grow.

The important thing about this stage is that building custom tools requires a ton of base knowledge. Which models do you prefer for which jobs? How do you manage context so the agent has what it needs? How do you define what a “good” output looks like so you can actually verify what it gives you? These are all things you learn at the earlier stages. Without that foundation, the “Fly” stage gets overwhelming fast.

Don’t skip stages

The biggest mistake I see is someone watching a cool agent demo and deciding they need to build one immediately. They jump straight to the “Fly” stage without the base knowledge from “Crawl”, “Walk”, and “Run”, and it falls apart. They don’t know which models to use, how to structure their prompts, or how to tell if what they’re getting back is actually good. It gets overwhelming and they give up, which is the same burnout cycle we started with.

Getting to the point where I’m building a custom CMO agent has been a 2-3 year progression for me. The tools are making it faster for everyone now, and I don’t think it needs to take that long anymore (especially if you’re willing to get some help implementing this process), but the instinct and understanding you build at each stage is genuinely what makes the next one work. You can’t skip the reps.

Where are you right now?

If you haven’t started at all: install Granola (or a similar tool), start transcribing your client calls, and get them organized. That’s it. Don’t worry about ChatGPT or agents or any of the rest of it yet.

If you’re already prompting AI but getting generic results: think about where you’re re-explaining the same context over and over. Set up your first Claude Project for your most active client and see how the answers change.

If you’re already building agents or custom tools: make sure you’re not outrunning your own understanding. The agents are only as good as the instructions you give them, and those instructions come from experience you can only build by going through the earlier stages.

Wherever you are, the path forward is the same: one stage at a time, building on what you learned in the last one.

Want a quick-reference version of this framework? I made a one-page infographic with all four stages, example tools, and use cases. [Download it here →]

If you want someone to help you figure out where you are and what to focus on next, that’s exactly what I do here at FloorboardAI. Reach out anytime.